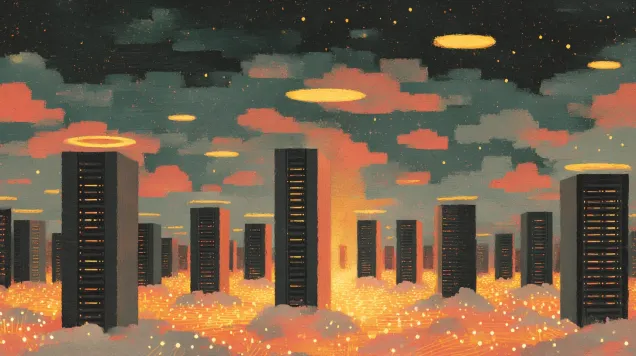

The Data Center Moratorium Movement: How Bernie Sanders and Ron DeSantis Found Common Ground

Senator Bernie Sanders became the first federal legislator to call for a national moratorium on AI data center construction. His unlikely alignment with Governor Ron DeSantis reflects deepening politi...