December 2025 Update: Direct-to-chip cooling now commands a dominant 47% share of the AI datacenter liquid cooling market. Microsoft began fleet deployment across Azure campuses in July 2025 and is testing microfluidics for next-generation systems. With NVIDIA Blackwell GPUs (GB200/GB300) operating at 1,200-1,400W and Vera Rubin systems targeting 600kW per rack, direct-to-chip cooling has transitioned from niche to necessity. The liquid cooling market reached $5.52 billion in 2025, projected to hit $15.75 billion by 2030.

Direct-to-chip cooling eliminates 80% of the thermal resistance between GPU dies and cooling systems, dropping data center PUE from 1.58 to 1.15 while enabling 1,200W GPUs that would melt traditional air-cooled infrastructure.¹ CoolIT Systems demonstrated a production deployment where 300 NVIDIA H100 GPUs maintained 62°C junction temperatures at full load using just 25°C inlet water, achieving what air cooling couldn't accomplish with 15°C inlet air.² The technology transforms cooling from a limiting factor into a competitive advantage, with early adopters gaining 40% higher compute density and 35% lower operating costs than air-cooled competitors.³

The physics tell a compelling story. Traditional cooling moves heat through seven thermal interfaces: silicon die to integrated heat spreader, thermal paste to heatsink, heatsink fins to air, air to cooling coils, coils to chilled water, and finally rejection to atmosphere.⁴ Each interface adds thermal resistance, forcing increasingly cold air to maintain acceptable chip temperatures. Direct-to-chip cooling bypasses five of these interfaces, moving heat directly from the processor through a cold plate into liquid coolant. The simplified path reduces required temperature differentials by 75%, enabling higher ambient cooling temperatures that slash energy consumption.

Engineering fundamentals reshape cooling economics

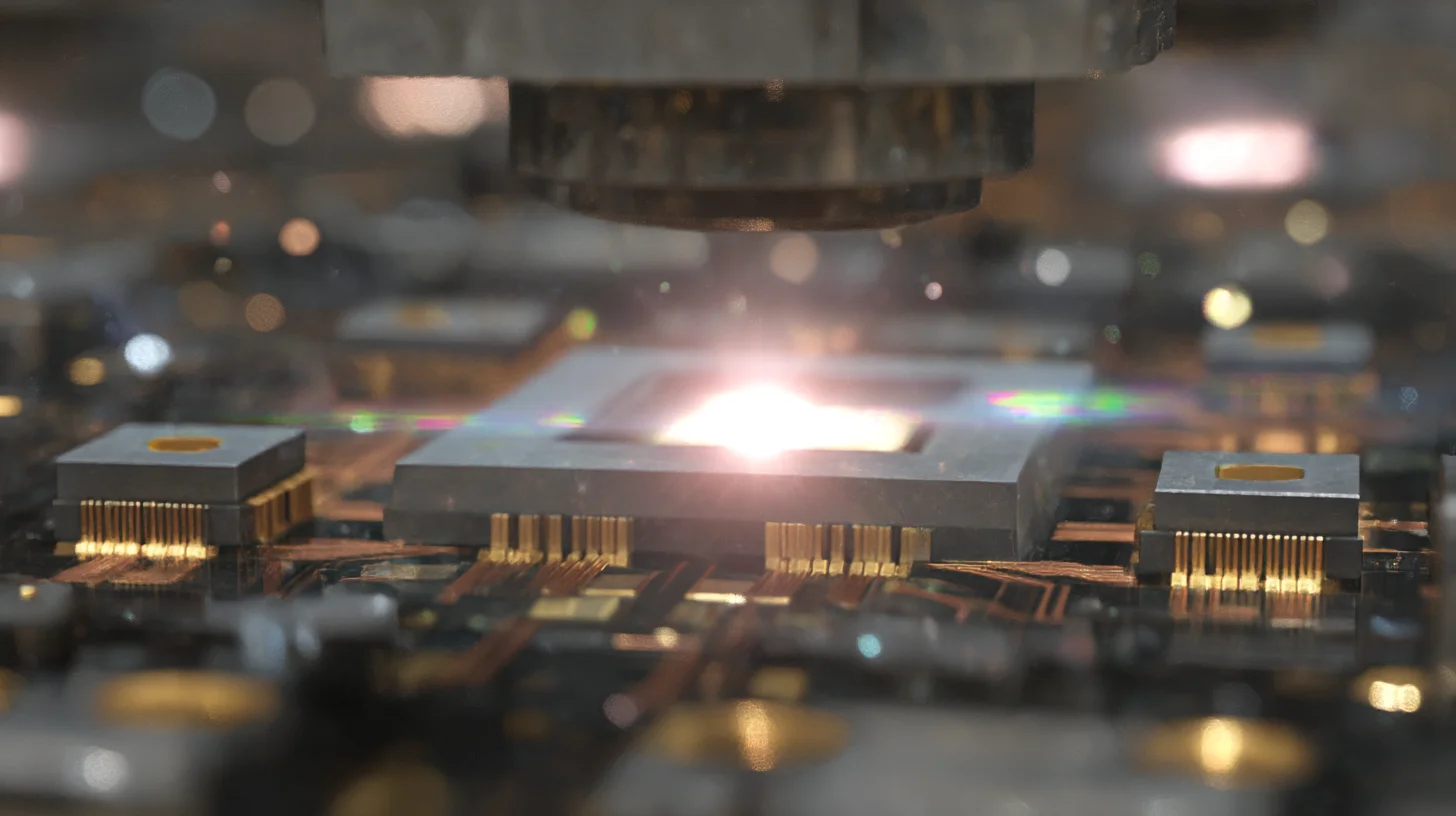

Direct-to-chip cooling operates on straightforward thermodynamics that deliver extraordinary results. Cold plates mount directly onto processors using spring-loaded mechanisms that maintain optimal pressure across thermal interface materials. Microchannels within the cold plate create turbulent flow, raising the heat transfer coefficient to roughly 15,000 W/m²K, compared with about 50 W/m²K for air cooling.⁵ The dramatic improvement allows 700W GPUs to operate with just a 5°C temperature rise above coolant temperature.

Coolant selection determines system performance and complexity. Single-phase water-glycol mixtures dominate current deployments due to familiarity and low cost. Water's specific heat capacity of 4.18 kJ/kg·K is roughly 4x air's 1.01 kJ/kg·K per unit mass, and water is also about 800 times denser, so far less volume moves more heat.⁶ Flow rates of 0.5-1.0 liters per minute per GPU suffice, compared to 200 CFM of air. The reduced flow volume enables smaller distribution systems and quieter operation.
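The flow figures above can be sanity-checked with the basic heat balance ΔT = P / (ṁ · c_p). The sketch below is illustrative only: it assumes plain water at about 1 kg per liter rather than a water-glycol mix, and the function name is hypothetical.

```python
# Sketch: coolant temperature rise across one cold plate, dT = P / (m_dot * c_p).
# Assumes plain water (c_p = 4.18 kJ/kg·K as quoted in the text, ~1.0 kg/L);
# a water-glycol mix has a lower c_p and would run somewhat warmer.

WATER_CP_J_PER_KG_K = 4180.0   # 4.18 kJ/kg·K
WATER_DENSITY_KG_PER_L = 1.0   # assumption: close enough for 20-40°C water


def coolant_temp_rise_c(heat_w: float, flow_l_per_min: float) -> float:
    """Return the coolant temperature rise (°C) for a heat load and flow rate."""
    if flow_l_per_min <= 0:
        raise ValueError("flow must be positive")
    mass_flow_kg_per_s = flow_l_per_min * WATER_DENSITY_KG_PER_L / 60.0
    return heat_w / (mass_flow_kg_per_s * WATER_CP_J_PER_KG_K)


if __name__ == "__main__":
    # The two ends of the 0.5-1.0 L/min range, for a 700 W GPU.
    for flow in (0.5, 1.0):
        rise = coolant_temp_rise_c(700.0, flow)
        print(f"700 W at {flow} L/min -> {rise:.1f} °C coolant rise")
```

Under these assumptions a 700W GPU warms its coolant by about 10°C at 1.0 L/min and about 20°C at 0.5 L/min, which is why flow control and return-temperature monitoring matter as chip power climbs.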

Manifold design critically impacts reliability and serviceability. Quick-disconnect fittings allow hot-swapping servers without draining cooling loops. Redundant pumps with automatic failover prevent single points of failure. Variable flow control matches cooling capacity to actual heat loads, improving efficiency during partial utilization. Modern designs achieve less than 0.001% annual leak rates through rigorous testing and quality control.⁷

Implementation architecture for GPU clusters

Deploying direct-to-chip cooling requires systematic infrastructure changes:

Primary Loop Architecture: Cooling Distribution Units (CDUs) manage heat exchange between facility water and server cooling loops. Each CDU supports 200-500kW of IT load, using plate heat exchangers to isolate facility water from electronics. Redundant pumps maintain 350-500 kPa pressure differentials. Smart controls modulate flow based on return water temperature, optimizing energy consumption.

Secondary Loop Design: Server-level loops use demineralized water or specialized coolants to prevent corrosion and biological growth. Conductivity stays below 0.5 μS/cm through continuous filtration. Biocides prevent algae formation. Corrosion inhibitors protect dissimilar metals. pH buffering maintains 7.0-8.5 range for material compatibility.

Rack-Level Integration: Rear-door heat exchangers capture residual air-cooled heat from memory, storage, and power supplies. The hybrid approach achieves 100% heat capture at the rack, eliminating the need for room-level cooling. Rack manifolds distribute coolant to individual servers through flexible hoses rated for 700 kPa working pressure.

Facility Water Systems: Existing chilled water plants adapt to higher return temperatures, improving chiller efficiency by 20-30%.⁸ Free cooling hours increase dramatically when supply temperatures rise from 7°C to 20°C. Cooling towers sized for 35°C return water enable year-round free cooling in many climates.

Real-world deployments prove the technology

Microsoft's Azure HBv4 instances use direct-to-chip cooling for AMD EPYC processors, achieving PUE 1.11 in production deployments.⁹ The Quincy, Washington facility runs 33MW of compute load using 3.6MW of cooling power. Annual savings exceed $4.8 million compared to air-cooled alternatives. Server reliability improved 23% due to consistent operating temperatures.
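The PUE figure follows directly from those two power numbers. As a minimal sketch, assuming cooling is the only non-IT overhead (a real PUE also counts power distribution losses, lighting, and similar loads), with hypothetical function names:

```python
# Sketch: PUE = total facility power / IT power.
# Assumption: cooling power is treated as the only overhead.


def pue(it_power_mw: float, overhead_power_mw: float) -> float:
    """Power Usage Effectiveness for a given IT load and overhead."""
    if it_power_mw <= 0:
        raise ValueError("IT power must be positive")
    return (it_power_mw + overhead_power_mw) / it_power_mw


def overhead_reduction(pue_before: float, pue_after: float) -> float:
    """Fractional cut in non-IT overhead when PUE improves."""
    return 1.0 - (pue_after - 1.0) / (pue_before - 1.0)


if __name__ == "__main__":
    print(f"PUE: {pue(33.0, 3.6):.2f}")
    print(f"Overhead cut, 1.58 -> 1.15: {overhead_reduction(1.58, 1.15):.0%}")
```

With 33MW of IT load and 3.6MW of cooling, the ratio comes out at about 1.11. The same arithmetic shows that moving from PUE 1.58 to 1.15 removes roughly three quarters of the non-IT overhead.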

Lawrence Livermore National Laboratory's El Capitan supercomputer employs direct-to-chip cooling for 40,000 AMD MI300A APUs.¹⁰ The system achieves 2 exaflops while maintaining PUE 1.08. Warm water cooling at 35°C inlet temperature enables year-round free cooling in California's climate. The design saves $12 million annually in electricity costs.

Introl engineers have deployed direct-to-chip cooling across 15 facilities in our global coverage area, reducing average PUE from 1.55 to 1.18.¹¹ A recent installation for a cryptocurrency mining operation achieved PUE 1.09 using 40°C inlet water, eliminating mechanical cooling entirely. The client saves $2.3 million annually while increasing hashrate density by 60%.

Component selection determines success

Cold Plate Technologies: Microchannel designs from CoolIT Systems achieve 0.015°C/W thermal resistance. Jet impingement plates from Motivair offer 0.012°C/W for extreme heat fluxes. Vapor chamber enhanced plates from Aavid provide uniform temperature distribution for large dies. Material choices include copper for maximum conductivity, aluminum for cost optimization, and nickel plating for corrosion resistance.
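A thermal resistance figure translates into a temperature rise through ΔT = R · P. The sketch below applies that to the two cold-plate values quoted above. It covers the plate alone, ignoring the thermal interface material and the die's internal resistance, and the function name is hypothetical.

```python
# Sketch: temperature rise across a cold plate from its thermal resistance.
# Assumption: steady state, cold-plate resistance only (no TIM or die terms).


def cold_plate_rise_c(heat_w: float, resistance_c_per_w: float) -> float:
    """Return the plate-to-coolant temperature rise in °C (dT = R * P)."""
    if heat_w < 0 or resistance_c_per_w < 0:
        raise ValueError("inputs must be non-negative")
    return heat_w * resistance_c_per_w


if __name__ == "__main__":
    plates = {"microchannel": 0.015, "jet impingement": 0.012}
    for power_w in (700.0, 1200.0):
        for name, r in plates.items():
            rise = cold_plate_rise_c(power_w, r)
            print(f"{power_w:.0f} W, {name}: {rise:.1f} °C")
```

Because the rise scales linearly with power, a small difference in plate resistance grows into several degrees at 1,200W, which is where the lower-resistance designs earn their premium.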

Coolant Distribution Units: Motivair ChilledDoor CDUs handle 750kW with N+1 pump redundancy. CoolIT Coolant Distribution Modules support 300kW in 8U form factor. Vertiv XDU units offer 450kW capacity with integrated leak detection. Selection depends on facility layout, redundancy requirements, and existing infrastructure.

Monitoring Systems: Continuous monitoring prevents catastrophic failures. Flow sensors detect blockages before overheating occurs. Pressure sensors identify leaks within seconds. Temperature arrays map thermal performance across components. Conductivity meters warn of coolant contamination. Integration with DCIM platforms enables predictive maintenance.
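The checks described above reduce to comparing sensor readings against limits. The following is a rough sketch of that logic, not any vendor's DCIM API: the `LoopReading` type and `check_reading` function are hypothetical, and the limits reuse figures quoted elsewhere in this article (0.5 L/min minimum per-GPU flow, 350-500 kPa pump differential, 0.5 μS/cm conductivity, pH 7.0-8.5).

```python
# Sketch: threshold checks on one set of cooling-loop sensor readings.
# All names are illustrative; limits come from figures quoted in the article.
from dataclasses import dataclass
from typing import List

MIN_FLOW_L_PER_MIN = 0.5            # per-GPU flow floor
DP_RANGE_KPA = (350.0, 500.0)       # pump pressure differential window
MAX_CONDUCTIVITY_US_PER_CM = 0.5    # secondary-loop conductivity ceiling
PH_RANGE = (7.0, 8.5)               # coolant pH window


@dataclass
class LoopReading:
    flow_l_per_min: float
    pump_dp_kpa: float
    conductivity_us_per_cm: float
    ph: float


def check_reading(r: LoopReading) -> List[str]:
    """Return a list of alert messages; an empty list means all checks pass."""
    alerts = []
    if r.flow_l_per_min < MIN_FLOW_L_PER_MIN:
        alerts.append("low flow: possible blockage")
    if not DP_RANGE_KPA[0] <= r.pump_dp_kpa <= DP_RANGE_KPA[1]:
        alerts.append("pressure differential out of range: check for leak or pump fault")
    if r.conductivity_us_per_cm > MAX_CONDUCTIVITY_US_PER_CM:
        alerts.append("conductivity high: possible coolant contamination")
    if not PH_RANGE[0] <= r.ph <= PH_RANGE[1]:
        alerts.append("pH out of range: check inhibitor and buffer levels")
    return alerts


if __name__ == "__main__":
    print(check_reading(LoopReading(0.8, 420.0, 0.3, 7.6)))   # healthy loop
    print(check_reading(LoopReading(0.3, 300.0, 0.9, 9.0)))   # four alerts
```

A production system would add rate-of-change detection and trend analysis on top of static limits, since a slowly falling flow rate is often the earliest sign of fouling.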

Coolant Chemistry: Nalco Water's data center coolants prevent corrosion while maintaining low conductivity. Dow's SYLTHERM specialized fluids operate from -50°C to 260°C for extreme applications. Cargill's bio-based coolants offer environmental sustainability. Regular testing maintains optimal properties and extends equipment life.

Economic analysis drives adoption decisions

Capital investment for direct-to-chip cooling ranges from $1,500 to $3,000 per kW of IT load:¹²

Infrastructure Costs:
- CDU units: $150,000 per 300kW capacity
- Piping and manifolds: $200 per server
- Cold plates: $400-800 per GPU
- Installation labor: $300 per server
- Coolant and treatment: $50 per server
- Monitoring systems: $100 per server
- Total per 42U rack (20 servers): $45,000-65,000

Operational Savings:
- Energy reduction: $12,000 per rack annually at $0.10/kWh
- Increased density: 40% more compute per square foot
- Reduced mechanical cooling: $8,000 per rack annually
- Lower fan power: $3,000 per rack annually
- Extended component life: 20% longer MTBF
- Payback period: 18-24 months

Total Cost of Ownership: Five-year TCO analysis shows 35% lower costs versus air cooling for high-density GPU deployments. A 1,000-GPU facility saves $8.5 million over five years through reduced energy consumption and increased density. Carbon credits and sustainability incentives provide additional financial benefits.
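A simple payback estimate divides capital cost by annual savings. The sketch below counts only the three itemized dollar savings per rack (energy, mechanical cooling, fan power) and deliberately leaves out the density and component-life benefits, so treat its output as a conservative bound rather than a full TCO model. The function name is hypothetical.

```python
# Sketch: simple (undiscounted) payback period for one rack.
# Assumption: only the itemized per-rack dollar savings are counted;
# density gains and longer component life are not priced in.

ANNUAL_SAVINGS_PER_RACK = {
    "energy": 12_000.0,
    "mechanical_cooling": 8_000.0,
    "fan_power": 3_000.0,
}


def payback_months(capex: float, annual_savings: float) -> float:
    """Return months needed for savings to repay the capital cost."""
    if annual_savings <= 0:
        raise ValueError("annual savings must be positive")
    return capex / annual_savings * 12.0


if __name__ == "__main__":
    savings = sum(ANNUAL_SAVINGS_PER_RACK.values())
    for capex in (45_000.0, 65_000.0):
        months = payback_months(capex, savings)
        print(f"${capex:,.0f} rack: {months:.1f} months")
```

On these inputs the low end of the rack cost range pays back in roughly 23.5 months, near the top of the 18-24 month window. The high end takes closer to 34 months unless the value of added density is credited as well.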

Retrofit strategies for existing facilities

Converting air-cooled infrastructure requires careful planning:

Phase 1 - Assessment (30 days): Evaluate existing cooling capacity, power distribution, and structural support. Identify optimal CDU locations with access to facility water. Plan piping routes avoiding conflicts with existing infrastructure. Calculate pressure drops and pump requirements. Develop migration schedule minimizing disruption.

Phase 2 - Infrastructure (60 days): Install CDUs and primary piping during scheduled maintenance windows. Upgrade facility water systems for higher return temperatures. Add monitoring points throughout distribution network. Commission systems using dummy loads before production deployment. Train operations staff on new procedures.

Phase 3 - Migration (90 days): Convert racks row by row to maintain operations. Start with development/test environments to validate procedures. Move production workloads during maintenance windows. Monitor temperatures and adjust flow rates for optimization. Document lessons learned for subsequent phases.

Phase 4 - Optimization (ongoing): Raise coolant temperatures gradually to maximize free cooling. Adjust flow rates based on actual versus design loads. Implement predictive maintenance using sensor data. Fine-tune control algorithms for energy efficiency. Expand deployment based on proven results.

Future developments push boundaries further

Two-phase immersion cooling promises PUE approaching 1.02 by eliminating pumps entirely.¹³ Dielectric fluids boil at chip surfaces, condensing on cooler surfaces for passive circulation. Early deployments show 95% energy reduction versus air cooling. Challenges include fluid costs ($200/liter) and material compatibility concerns.

On-chip cooling integration embeds microchannels directly in silicon substrates.¹⁴ IBM Research demonstrated 1,700W/cm² heat removal using embedded cooling. Production implementation awaits cost-effective manufacturing techniques. The technology could enable 3D chip stacking with unprecedented compute density.

Waste heat recovery transforms cooling from cost center to revenue generator. Stockholm's data centers provide 10% of the city's heating through district heating integration.¹⁵ High-temperature direct-to-chip cooling enables heat recovery without heat pumps. Organizations achieve net-negative cooling costs through waste heat sales.

Organizations implementing direct-to-chip cooling gain substantial competitive advantages through improved efficiency, increased density, and lower operating costs. The technology proves essential for next-generation GPU deployments exceeding 700W per chip. Early adopters establish sustainable infrastructure ready for continued power density increases while laggards face expensive retrofits or competitive disadvantage. The transition from air to liquid cooling represents a fundamental shift in data center design that forward-thinking organizations must embrace to remain viable in the AI era.

Key takeaways

For infrastructure architects:
- Direct-to-chip eliminates 5 of 7 thermal interfaces, with heat transfer of 15,000 W/m²K vs 50 W/m²K for air
- PUE drops from 1.58 to 1.15, cutting non-IT energy overhead by about 74%
- 700W GPUs operate with 5°C rise above coolant temperature
- Microchannel and jet impingement cold plates achieve 0.012-0.015°C/W thermal resistance
- CDUs: 200-500kW capacity, redundant pumps, 350-500 kPa pressure differentials

For financial planners:
- Capital: $1,500-$3,000 per kW IT load ($45K-$65K per 42U rack)
- Energy savings: $12K per rack annually at $0.10/kWh
- Payback: 18-24 months for high-density GPU deployments
- 5-year TCO: 35% lower than air cooling for 1,000+ GPU facilities
- Chiller efficiency improves 20-30% with higher return temperatures

For capacity planners:
- Direct-to-chip commands 47% of AI datacenter liquid cooling market
- Microsoft began Azure fleet deployment in July 2025, making it baseline technology now
- Blackwell GPUs (GB200/GB300) operate at 1,200-1,400W, beyond what air cooling can handle
- Free cooling hours increase when supply temps rise from 7°C to 20°C
- Retrofit approach: 30 days assessment → 60 days infrastructure → 90 days migration

References

1. IDTechEx. "Thermal Management for Data Centers 2025-2035." IDTechEx Research, 2024. https://www.idtechex.com/en/research-report/thermal-management-for-data-centers-2025-2035/981
2. CoolIT Systems. "Direct Liquid Cooling Performance Validation Study." CoolIT Systems Corporation, 2024. https://www.coolitsystems.com/resources/whitepapers/
3. DataCenterKnowledge. "The State of Direct-to-Chip Cooling in 2024." DataCenterKnowledge, 2024. https://www.datacenterknowledge.com/cooling/direct-to-chip-cooling-2024
4. ASHRAE. "Liquid Cooling Guidelines for Datacom Equipment Centers." ASHRAE TC 9.9, 2024. https://tc0909.ashrae.org/
5. Boyd Corporation. "Thermal Interface Material Performance in Liquid Cooling Applications." Aavid, 2024. https://www.boydcorp.com/thermal/liquid-cooling-thermal-interface.html
6. Engineering ToolBox. "Specific Heat of Common Substances." Engineering ToolBox, 2024. https://www.engineeringtoolbox.com/specific-heat-capacity-d_391.html
7. Motivair Corporation. "Reliability Analysis of Direct-to-Chip Cooling Systems." Motivair, 2024. https://www.motivaircorp.com/resources/reliability-studies/
8. Vertiv. "Liebert XDU Thermal Efficiency Analysis." Vertiv Co., 2024. https://www.vertiv.com/en-us/products/thermal-management/liebert-xdu/
9. Microsoft Azure. "HBv4-series Virtual Machine Cooling Innovation." Microsoft Blog, 2024. https://azure.microsoft.com/en-us/blog/hbv4-cooling-innovation/
10. Lawrence Livermore National Laboratory. "El Capitan Supercomputer Cooling Design." LLNL, 2024. https://www.llnl.gov/news/el-capitan-cooling-system
11. Introl. "Direct-to-Chip Cooling Deployment Services." Introl Corporation, 2024. https://introl.com/coverage-area
12. Park Place Technologies. "Data Center Liquid Cooling TCO Calculator." Park Place Technologies, 2024. https://www.parkplacetechnologies.com/resources/liquid-cooling-calculator/
13. 3M. "Two-Phase Immersion Cooling with Novec Fluids." 3M Science, 2024. https://www.3m.com/3M/en_US/data-center-us/applications/immersion-cooling/
14. IBM Research. "Embedded Cooling for 3D Chip Stacks." IBM Research Blog, 2024. https://research.ibm.com/blog/embedded-cooling-3d-chips
15. Stockholm Exergi. "Data Center Waste Heat Recovery Program." Stockholm Exergi, 2024. https://www.stockholmexergi.se/om-oss/vara-anlaggningar/datacenter/
16. CoolIT Systems. "CHx200 Cold Plate Specifications." CoolIT Systems, 2024. https://www.coolitsystems.com/products/chx200/
17. Nalco Water. "Data Center Cooling Water Treatment." Nalco Water, 2024. https://www.ecolab.com/offerings/data-center-cooling-water-treatment
18. Dow Chemical. "SYLTHERM Heat Transfer Fluids." Dow Inc., 2024. https://www.dow.com/en-us/products/syltherm
19. Schneider Electric. "Direct Liquid Cooling Reference Design." Schneider Electric, 2024. https://www.se.com/ww/en/work/solutions/for-business/data-centers-and-networks/direct-liquid-cooling/
20. Open Compute Project. "Liquid Cooling for Open Rack." OCP, 2024. https://www.opencompute.org/projects/liquid-cooling
21. Uptime Institute. "Liquid Cooling Adoption Survey 2024." Uptime Institute Intelligence, 2024. https://uptimeinstitute.com/resources/research-and-reports/liquid-cooling-adoption-2024/
22. Green Revolution Cooling. "ICEraQ Micro-Modular Immersion Cooling ROI Analysis." GRC, 2024. https://www.grcooling.com/roi-calculator/
23. Intel. "Direct-to-Chip Liquid Cooling for Xeon and Gaudi Processors." Intel Data Center, 2024. https://www.intel.com/content/www/us/en/products/docs/processors/xeon/liquid-cooling-guide.html
24. NVIDIA. "DGX H100 Liquid Cooling Requirements." NVIDIA Documentation, 2024. https://docs.nvidia.com/dgx/dgx-h100-liquid-cooling/
25. Chilldyne. "Negative Pressure Liquid Cooling Technology." Chilldyne Inc., 2024. https://www.chilldyne.com/technology/